28 Apr 2015

Needed me a SaltStack test environment with a flexible number of minions. However, after a few quick searches there wasn’t much around that fit this bill. What follows here, then, is a Vagrantfile, minion.conf, and bash file to build this environment. In gist form:

References

http://humankeyboard.com/saltstack/2014/saltstack-virtualbox-vagrant.html

05 Apr 2015

This started as an idea, a motion to put the user back into the user group. This is a great idea, and having taken on, and attempted to assist 5-ish folks in the first round, I learned a lot, and want to not only do this again, but want to make it a more frequent, ongoing sort of thing.

That is, beyond just mentoring someone short term, say over a few weeks, or until their next speaking engagement. Instead I’d like to help a bit longer term, and more broadly reaching. So here it goes:

The Program

Not sure if calling it a program is right, but alas. Over the next quarter or so, I’ll provide unlimited email support, and as much Skype / phone support as I can handle. At a minimum this means 1 hour a week on Skype (or phone) to talk about where you’re at, what you’ve worked on, or are working on, etc. These are 1-to-1 mentorships, that is, you and I working on helping you get to that next step.

Class Q2 - 2015

Class (if we can call it a class, a huddle, a gander, etc?), not really sure what to call it, the name isn’t as important as what it represents. It represents a group of five individuals at some point along their career path that want some help doing that ‘next’ thing.

You

Looking to do that ‘next’ thing. Have at least an hour a week for a Skype (or G+, or regular old phone call) to chat. Have a willingness or the ability to find more time in a week to work on that ‘next’ thing.

That’s pretty much it. There are no qualifiers other than “Don’t be an asshole”.

Me

Me, I do things. I’ve published a few books, I’ve worked with others to help them get down that path. I’ve spoken at some events, and again, have helped some others down that path. I helped start a podcast, which in turn has helped some folks take that next step. I also want to do more.

Selection Process

To be fair, I’m not sure this will get even the requisite 5 responses. As this is the second round and things aren’t exactly formal yet, I think that’s all there is to it. Be one of the 5. If there are more than 5 signed up, we’ll figure it out from there. If there are A LOT more than 5, I’ll let y’all know how it works from there.

Application

Here it is.

That’s all there is to it. Disclaimer: It’s a Google form, whose only recipient is me (insofar as these things can be guaranteed).

26 Feb 2015

It’s that time again, wherein my browser is eating all my memory and the tabs need to be closed.

That’s it this time around.

20 Feb 2015

What? Camp who?

I like coffee. A lot. As spring approaches, I am also gearing up to head back outdoors. Camping and coffee don’t always get along, however. That is, you can make some really good coffee when doing “plop and drop” camping, but if you’re reducing the amount of kit you carry, your options start to get really limited.

With that in mind, I decided to take on the ‘Camp Coffee’ problem by well… trying all the coffee. For Science!

Camp Coffee Showdown

There were about 6 rounds involved in this, ranging from instant to stove top. Each produced hugely different results and what follows are my experiences with each. You’ll note the ‘stove top’ was used here, as conditions outside weren’t conducive to fire construction.

The rounds:

- Taster’s Choice

- Folgers Instant

- Starbucks VIA

- Percolator

- Cowboy / Turkish

- Mokka

Note: Before we get too deep into this, I left out some of the standard options, travel french press, aeropress, etc. While you can pack those, they’re also more or less known quantities / qualities.

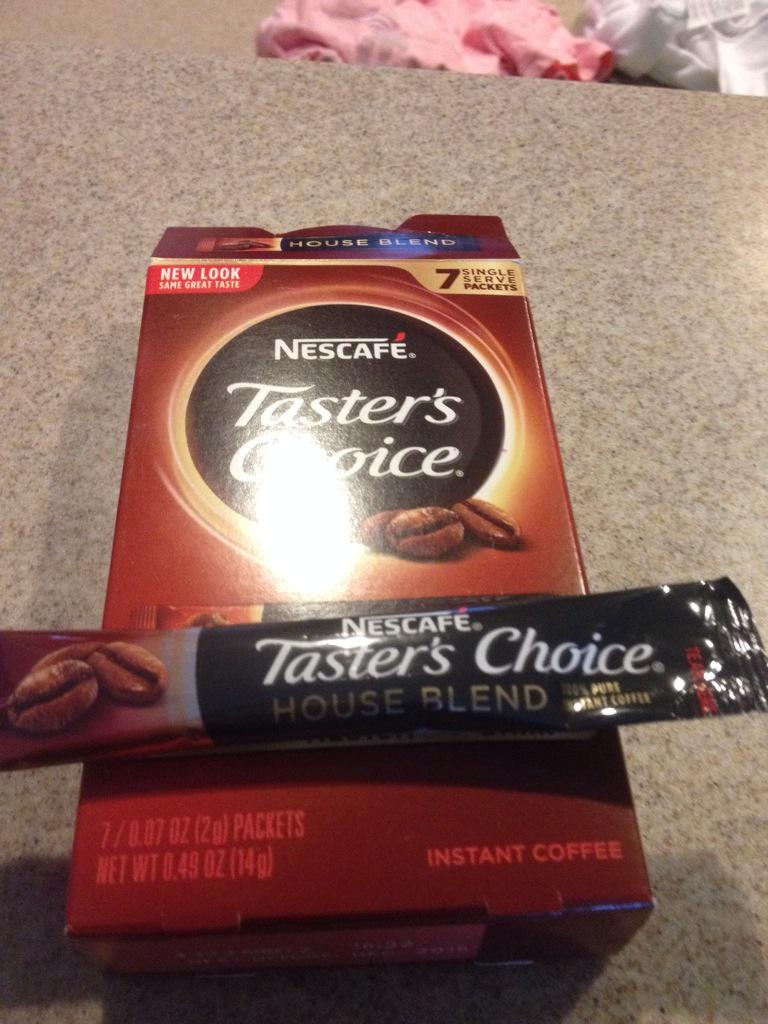

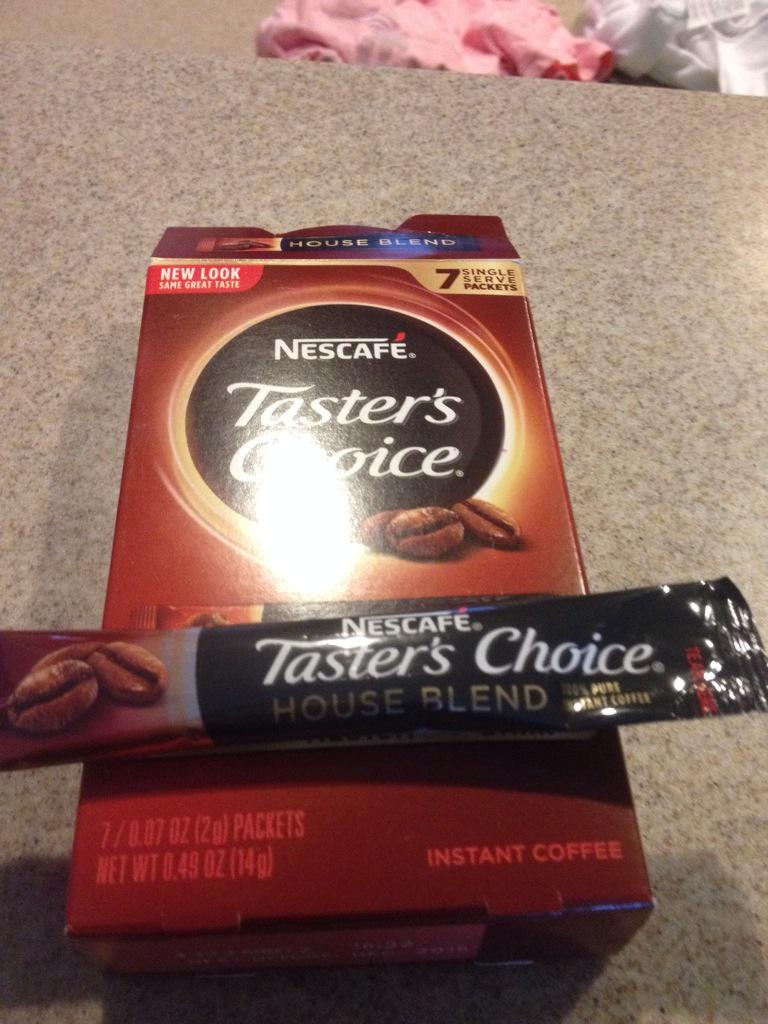

Camp Coffee Round 1 - Taster’s Choice

Yes it’s instant coffee. It is also everything that is wrong in the world, in the universe, all bundled up into one little package of crystallized hate. I mean, I suppose it’s coffee, if you like aromatic gym socks and hints of industrial cleanser. This was the only one in the round up to make me spit and pour it out as fast as I could.

Quality: None. There was no quality here. Unless you are trying to get an oil stain out of your driveway I guess.

Trouble: All of it. All the trouble.

Camp Coffee Round 2 - Folgers Instant

This stuff is magic. That is, after the old armpit socks from the last round, it was amazing how much like Folgers this tasted. Not sure if that is a compliment or not, but well, it was tolerable.

Quality: Only if I can’t find VIA

Trouble: None

Camp Coffee Round 3 - Starbucks VIA

Nothing fancy here. Hot water, Coffee Powder, Stir. It is the stir part that will get you. Unlike the other two ‘instant’ coffees in the round up, this one uses a ‘micro-grind’ of sorts. Like cowboy below, don’t drink the last sip.

Quality: Decent, bordering on good

Trouble: None

Camp Coffee Round 4 - Percolator

The coffee snob in me is almost ashamed of having done a percolated pot. Yes, it’s an American coffee staple. Yes, it’s what I grew up on. Yes, it brings back the memory of that amazing trip to Bear Den campground in North Carolina where I brewed my parents a cup of coffee, and completely forgot the water. Apparently one can burn coffee.

The flip side of this is: I could totally see bringing a fire or stove top pot on a trip if I had a smaller one in my arsenal.

Quality: Alright

Trouble: Don’t forget the water.

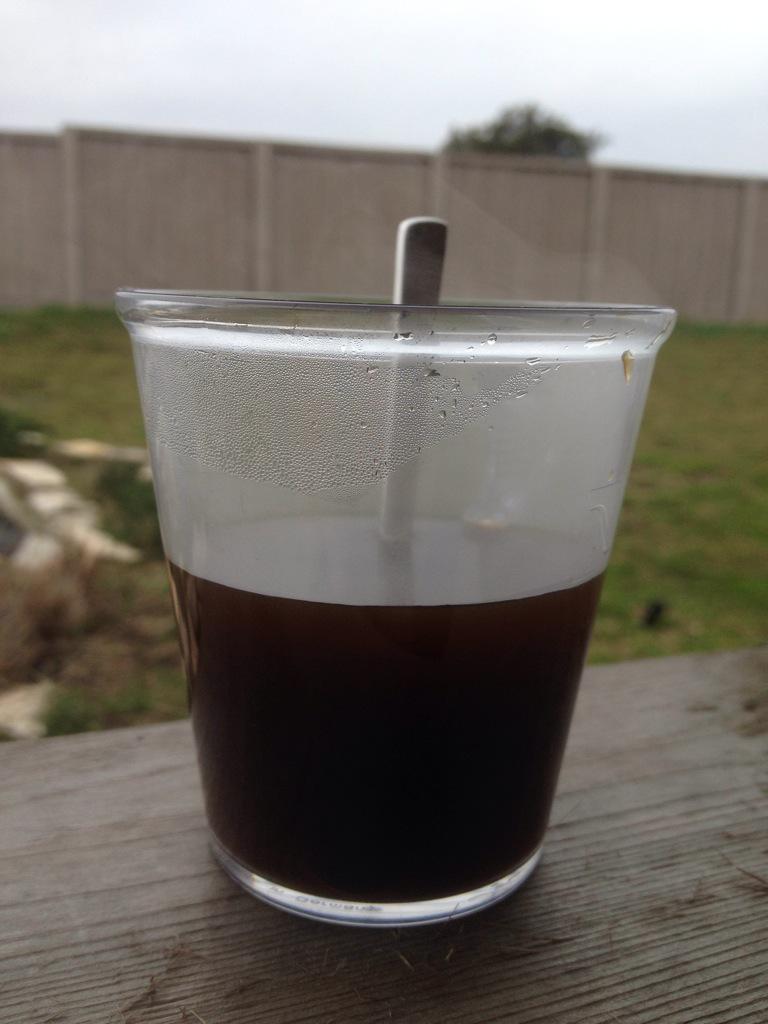

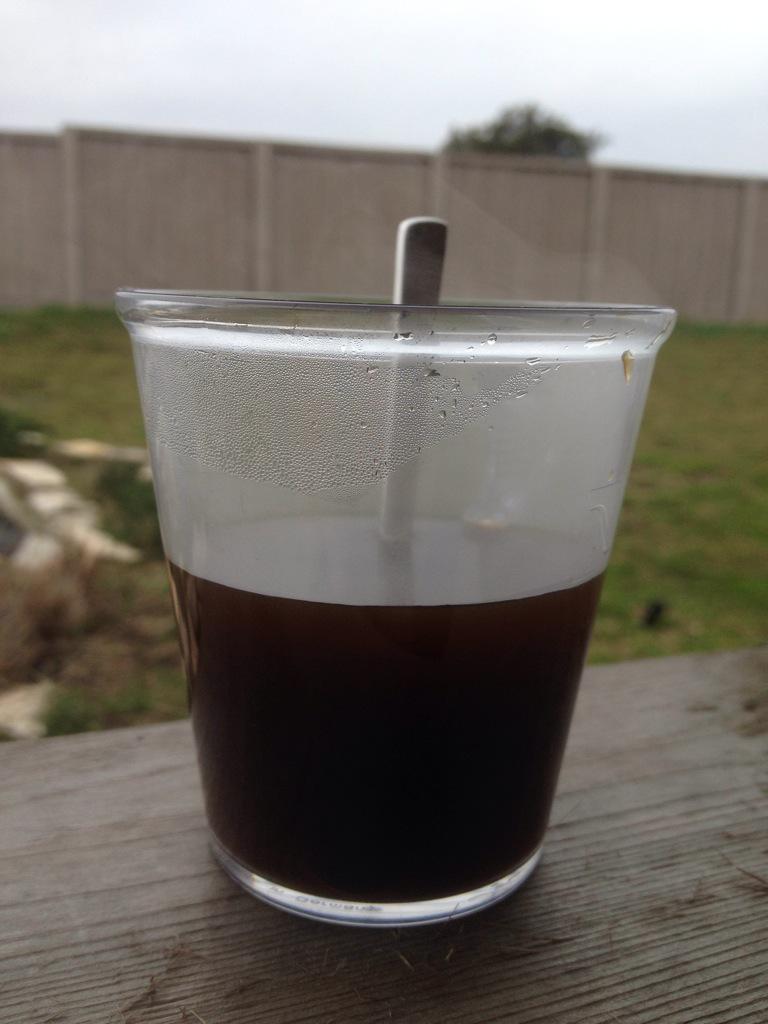

Camp Coffee Round 5 - Cowboy / Turkish

So I call this Cowboy rather than Turkish as, well, they’re prepared almost the same: powdered coffee grounds, boiled in the water a few times. The difference is that Turkish generally calls for an almost equal amount of sugar to go with it. Brewing it was fast, but it was still a bit of trouble, that is, having to schlep the grinder and the little Turkish pot thing. It was also gritty as heck. The slurry at the bottom should only be consumed if you need real ultimate power.

Quality: Good

Trouble: Medium

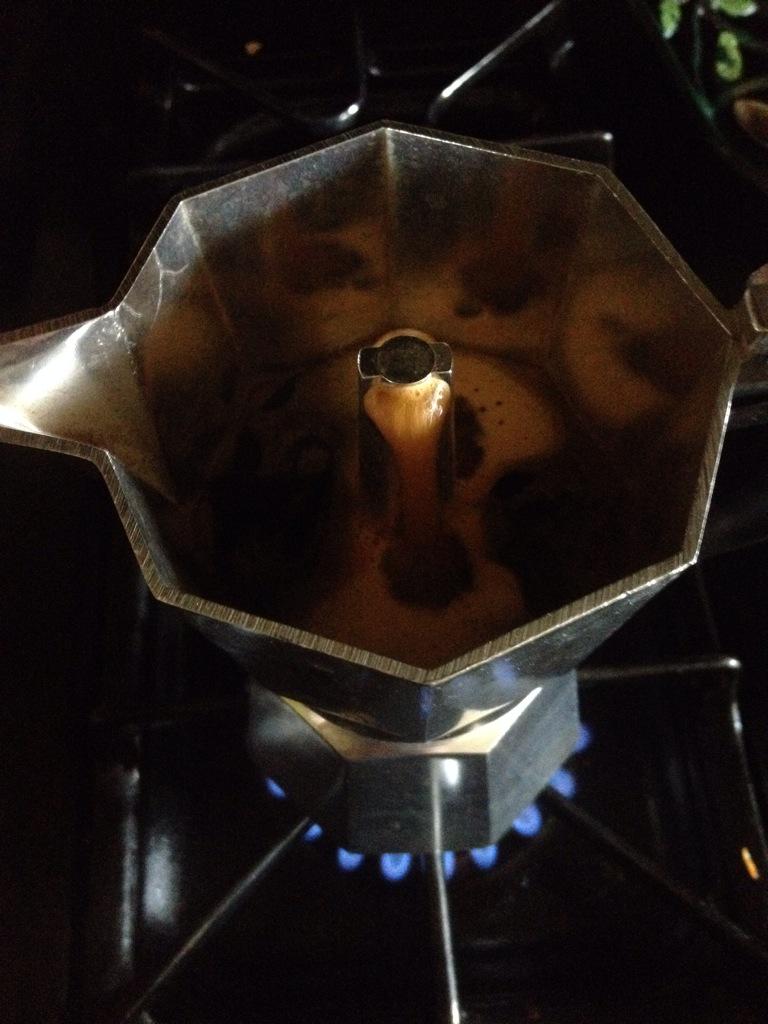

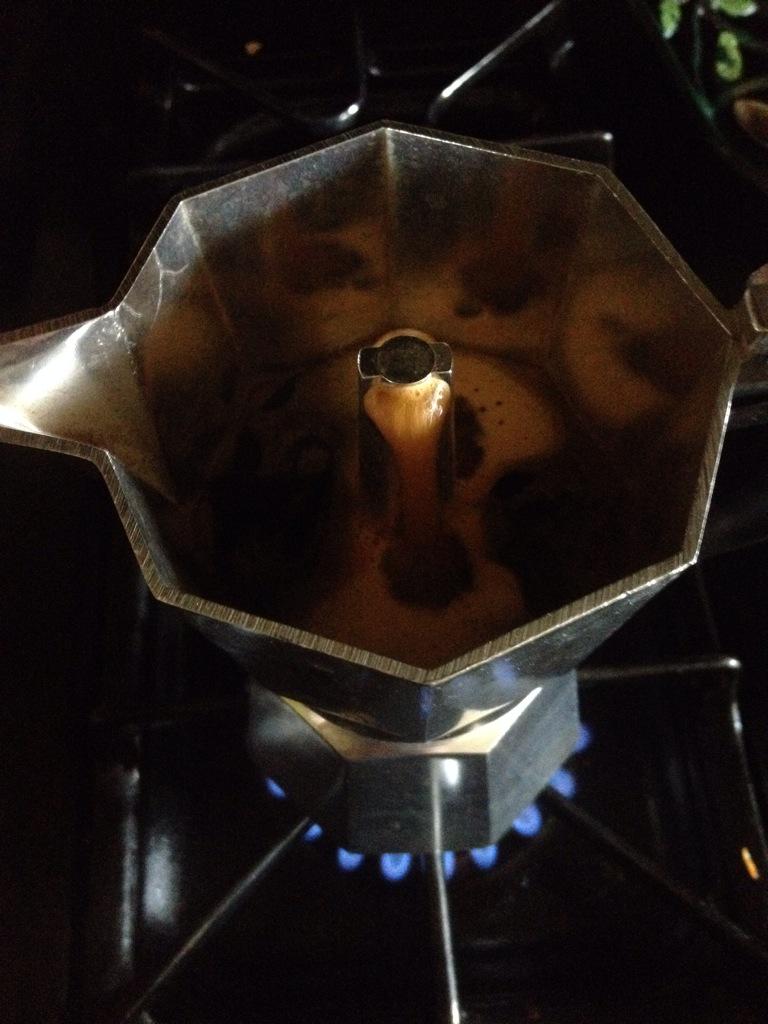

Camp Coffee Round 6 - Mokka

The stove top Mokka pot. This along with the percolator in round 4 is how I grew up drinking coffee. At my grandfather’s house it’d be called “black” coffee and brought out around the holidays or when he was playing cards out by the pool with his buddies. It was often mixed with Sambuca around the holidays. I knew what to expect on this one. It was added to get an idea of the time vs trouble. It was a lot of trouble, btw. Good for a plop and drop setup, but not so much if you need mobility.

Quality: Great

Trouble: A lot

Camp Coffee Summary

Let it not be said there are no consequences to drinking 6 cups of coffee in less than an hour. With that, I’ll likely go this season with either an Aeropress, Cowboy, or VIA depending on packing requirements.

09 Jan 2015

Before we get started, take a gander at my last few posts on this, here and here.

The idea here is roughly the same. That is, build a small, basic ‘base’ profile, template, state, or whatever, that has some simple hardening bits applied. The idea being to give you a reasonable start in turn letting you apply additional layers down the road.

The reason you’d move away from doing this with OpenStack Orchestration (Heat) and into a config management tool is that it allows you to apply the same practices more generically. That is, not everyone runs OpenStack, but lots of folks are moving to some flavor of configuration management tool, if they weren’t there already. You can then include these Salt states in Heat or whatever orchestration tool you choose.

Basic Hardening with SaltStack

For the sake of not making this a huge-tastic blog post, we’ll skip the part where I explain the what and how of getting started with SaltStack. Many others have done better than I. What follows is my top.sls, secureserver.sls and sysctl.sls files.

top.sls

This file controls which Salt minions get which ‘states’. For securing all the servers, that’s pretty straightforward. I would like to call out, however, the ordering of the states:

    base:
      '*':
        - sysctl
        - secureserver

This defines a ‘base’ state that will match for all Salt minions. (Minion is Salt for agent.) It then states to apply first the sysctl state, then the secureserver state.
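
To make the matching a little more concrete, here is a minimal Python sketch of how a glob target like `'*'` picks up every minion and keeps the states in their listed order. It is not Salt's actual code: the `TOP` dictionary and the `states_for` helper are hypothetical names for illustration, and real Salt supports other matchers that this ignores.

```python
from fnmatch import fnmatch

# Hypothetical in-memory copy of the top file above:
# environment -> target glob -> ordered list of states.
TOP = {"base": {"*": ["sysctl", "secureserver"]}}


def states_for(minion_id, top=TOP, env="base"):
    """Return the states whose target glob matches minion_id, in listed order."""
    matched = []
    for target, states in top[env].items():
        if fnmatch(minion_id, target):
            matched.extend(states)
    return matched


if __name__ == "__main__":
    # Any minion ID matches '*', so every minion gets both states.
    print(states_for("web01"))
```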

sysctl.sls

There isn’t much fancy to this file. In fact, it’s more or less a YAML formatted version of what you’d throw into /etc/sysctl.conf. What the lines below do is turn off IP routing, ignore broadcasts and bogus error responses, and shut down all manner of other fun ICMP stuff.

    net.ipv4.icmp_echo_ignore_broadcasts:
      sysctl.present:
        - value: 1

    net.ipv4.icmp_ignore_bogus_error_responses:
      sysctl.present:
        - value: 1

    net.ipv4.tcp_syncookies:
      sysctl.present:
        - value: 1

    net.ipv4.conf.all.log_martians:
      sysctl.present:
        - value: 1

    net.ipv4.conf.default.log_martians:
      sysctl.present:
        - value: 1

    net.ipv4.conf.all.accept_source_route:
      sysctl.present:
        - value: 0

    net.ipv4.conf.default.accept_source_route:
      sysctl.present:
        - value: 0

    net.ipv4.conf.all.rp_filter:
      sysctl.present:
        - value: 1

    net.ipv4.conf.default.rp_filter:
      sysctl.present:
        - value: 1

    net.ipv4.conf.all.accept_redirects:
      sysctl.present:
        - value: 0

    net.ipv4.conf.default.accept_redirects:
      sysctl.present:
        - value: 0

    net.ipv4.conf.all.secure_redirects:
      sysctl.present:
        - value: 0

    net.ipv4.conf.default.secure_redirects:
      sysctl.present:
        - value: 0

    net.ipv4.ip_forward:
      sysctl.present:
        - value: 0

    net.ipv4.conf.all.send_redirects:
      sysctl.present:
        - value: 0

    net.ipv4.conf.default.send_redirects:
      sysctl.present:
        - value: 0
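
To show how close the state file is to a plain /etc/sysctl.conf, here is a small Python sketch that renders a few of the same key/value pairs in sysctl.conf form. The `SETTINGS` dictionary holds only a subset of the keys above, and `to_sysctl_conf` is a hypothetical helper for illustration, not something Salt provides.

```python
# A subset of the settings from the state file above, as key -> value.
SETTINGS = {
    "net.ipv4.ip_forward": 0,
    "net.ipv4.tcp_syncookies": 1,
    "net.ipv4.conf.all.rp_filter": 1,
    "net.ipv4.conf.all.accept_redirects": 0,
}


def to_sysctl_conf(settings):
    """Render a mapping of sysctl keys to values as sysctl.conf lines."""
    return "\n".join(f"{key} = {value}" for key, value in settings.items())


if __name__ == "__main__":
    print(to_sysctl_conf(SETTINGS))
```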

secureserver.sls

This file, unlike the sysctl bits, actually does some install and config bits. If you’ve read the post I mentioned at the beginning of this post, you’ll recognize the packages being installed.

The configure bits are twofold: 1) we configure logwatch using a file hosted on the Salt master; 2) we configure iptables to allow ssh and deny all the other things:

    iptables:
      pkg:
        - installed

    denyhosts:
      pkg:
        - installed

    psad:
      pkg:
        - installed

    fail2ban:
      pkg:
        - installed

    logwatch:
      pkg:
        - installed

    aide:
      pkg:
        - installed

    /etc/cron.daily/00logwatch:
      file:
        - managed
        - source: salt://cron.daily/00logwatch
        - require:
          - pkg: logwatch

    ssh:
      iptables.append:
        - table: filter
        - chain: INPUT
        - jump: ACCEPT
        - match: state
        - connstate: NEW
        - dport: 22
        - proto: tcp
        - sport: 1025:65535
        - save: True

    allow established:
      iptables.append:
        - table: filter
        - chain: INPUT
        - match: state
        - connstate: ESTABLISHED
        - jump: ACCEPT

    default to reject:
      iptables.append:
        - table: filter
        - chain: INPUT
        - jump: REJECT
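
The order of those three iptables rules matters, since the chain is walked top to bottom and the first matching rule wins. Below is a rough Python model of that behaviour, assuming the rules land in INPUT in the order written. The `Rule` class and `verdict` function are made up for illustration, and the model is deliberately simplified: it ignores the source port range and everything else real iptables looks at.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    """One simplified INPUT rule; a field left as None matches anything."""
    jump: str
    connstate: Optional[str] = None
    proto: Optional[str] = None
    dport: Optional[int] = None


# The three rules above, in the order they are appended to the chain.
RULES = [
    Rule("ACCEPT", connstate="NEW", proto="tcp", dport=22),  # ssh
    Rule("ACCEPT", connstate="ESTABLISHED"),                 # allow established
    Rule("REJECT"),                                          # default to reject
]


def verdict(connstate, proto, dport, rules=RULES):
    """Return the jump target of the first rule that matches the packet."""
    for rule in rules:
        if rule.connstate is not None and rule.connstate != connstate:
            continue
        if rule.proto is not None and rule.proto != proto:
            continue
        if rule.dport is not None and rule.dport != dport:
            continue
        return rule.jump
    return None  # no rule matched; the chain policy would apply


if __name__ == "__main__":
    print(verdict("NEW", "tcp", 22))   # new ssh connection
    print(verdict("NEW", "tcp", 80))   # new http connection
```

With this ordering a new connection to port 80 falls through to the final rule, which is why a web server needs its own accept rule added ahead of the reject.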

Summary

In this post we’ve covered how to do some very basic hardening of your server using SaltStack. This likely won’t work for all circumstances (for example, if you’re going to actually run nginx or apache, you need to open those ports accordingly).


08 Jan 2015

Today’s link dump is brought to you by Google Chrome and my poor swap file.

07 Jan 2015

Sometime back in November, I received an email stating that I had been nominated, by the OpenStack community, to run for an Individual Board Member position. It was very shortly thereafter I had the 10 needed nominations to get on the ballot. I was super excited at the prospect, and am super humbled that I’d even be considered.

Let me say that again. I am incredibly humbled that the community reached out and hopped on to support my nomination.

I repeat that, because at this time, I’m deciding to back out of the election for two reasons. First and foremost, family considerations. Due to unforeseen family circumstances, I need to take a few steps back from the various things I am involved in for a while.

My second reason for backing out is the entry of Egle (@eglute | AnyStacker) into the elections. Having worked closely with Egle on a number of workshops, books, and work projects over the last few years, I can say that y’all will be in great hands if she’s elected.

Thank you again for all your support. Mayhaps next time.

30 Dec 2014

With the new year upon us, a lot of you will likely make some manner of commitment to be better at handling communications, processing email, and, in general, getting things done.

Here’s a system I’ve built / adapted from others who are much more effective at email than I am. The system in general has helped reduce stress and helped me focus on and engage better with the parts of email that matter. Because I am lazy, it’s also super simple and automated to a degree. I am not prescribing this as a fix to all of your email woes, rather, suggesting that like me, you read, learn, and adapt it to help improve how you handle email next year. (ZOMG RUNON)

It’s got some basic components, and because lists are a good SEO / Click-Bait thing, that’s how we’ll arrange it:

Unbreak Email

- 3 Folders

- 2 Times a day

- 1 Processing Rule

3 Folders

It’s actually two for processing and one for storing reference material. These folders are:

-> Inbox

|-> I'm Awesome

|-> Done

The basic workflow is that everything lands in the inbox, and gets processed into either the “I’m Awesome” (or Kudos, etc) folder or into the Done folder.

What is this “I’m Awesome” folder? It actually serves a few needs.

First and foremost it’s a tool to be used around review time. That is, you take any email where someone thanks you for a job well done, a contribution to a project, and other similar things, and place them here. If you do self-reviews, retrospectives, or other similar management things, it is handy to have this as a reminder of the contributions you’ve made over that time period, and if need be, remind The Man™.

Second, and no less important, is this folder’s ability to recharge your batteries. If you start to experience burnout, feel like you aren’t having an impact, and other similar feelings, looking back at this folder should remind you a bit about why you do what you do.

2 Times A Day

I generally check email twice a day. That is, across all accounts (gmail, provmware, work, etc). Twice a day.

This isn’t a hard and fast rule. That is, emergencies and other high priority things happen in life that necessitate checking with more frequency.

For things like collaboration, team communication, social, and more, there are more and better, near instant forms of communication.

During these processing times, process the “Inbox” folder first, as this contains everything that is addressed to me, or needs input. Giving these priority lets you address the 20% of email that needs 80% of your attention.

Next, process the “Done” folder which will contain mostly automated emails and email list mails. In this case, the “One Rule” discussed next puts anything and everything that is not addressed directly to you into the Done folder. This is because in the majority of circumstances, mail coming to an email list or from an automated source is informative, but not of major consequence if missed or filed as “Done”.

As these emails in this folder are largely informative in nature, skimming them has worked well for me. Skimming strategies however, are best left for another post (or some manner of productivity expert).

1 Email Rule

The 1 rule to rule them all that I use to support all of the above:

“On incoming mail, where I am not in the TO: or CC: field, move to ‘Done’”.

This enables the bits above, and in one fell swoop, will reduce your load significantly.
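
Most mail clients let you build this rule in their filter settings. To spell out the logic, here is a minimal Python sketch using the standard library `email` package. The `target_folder` function and the example addresses are hypothetical and only illustrate the check; actually moving the message is left to your mail client.

```python
from email import message_from_string
from email.utils import getaddresses


def target_folder(raw_message, my_addresses):
    """Return 'Inbox' if any of my addresses is in To: or Cc:, else 'Done'."""
    msg = message_from_string(raw_message)
    # Collect every address listed in the To: and Cc: headers.
    recipients = getaddresses(msg.get_all("To", []) + msg.get_all("Cc", []))
    mine = {address.lower() for address in my_addresses}
    if any(address.lower() in mine for _name, address in recipients):
        return "Inbox"
    return "Done"


if __name__ == "__main__":
    direct = "From: a@example.com\nTo: Me <me@example.com>\nSubject: hi\n\nbody"
    listmail = "From: bot@example.com\nTo: list@example.com\nSubject: build\n\nbody"
    print(target_folder(direct, ["me@example.com"]))
    print(target_folder(listmail, ["me@example.com"]))
```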

26 Dec 2014

Apologies in advance if this is a bit more personal than technical. There is plenty more tech content coming, have no fear.

It’s the holidays WOOOOO! Well, maybe not a seven O’s woo, but still, a good time nevertheless. On the Zen moments thing, about 6 years ago, my father told me this story, and designed the sticker you see above.

The story:

Five students of a Zen master were back from the market on their bicycles. As they dismounted, their master asked: “Why are you riding your bicycles?”

Each of them came up with different answers to their master’s query.

The first student said: “It is the bicycle that is carrying the sack of potatoes. I am glad that my back has escaped the pain of bearing the weight.”

The master was glad and said: “You are a smart boy. When you become old you will be saved of a hunch back unlike me.”

The second student had a different answer: “I love to have my eyes over the trees and the sprawling fields as I go riding.”

The teacher commended: “You have your eyes open and you see the world.”

The third disciple came up with yet a different answer: “When I ride I am content to chant ‘nam myoho renge kyo’”

The master spoke words of appreciation: “Your mind will roll with ease like a newly trued wheel.”

The fourth disciple said: “Riding my bicycle I live in perfect harmony of things.”

The pleased master said: “You are actually riding the golden path of non-harming or non violence.”

The fifth student said: “I ride my bicycle to ride my bicycle.”

The master walked up to him and sat at his feet and said: “I am your disciple!”

Having ridden a bicycle for a number of years, I have used it for various means and in various phases. Weight-loss, transportation, racing, harmony with nature, etc. However, over the last several years through varied events, dramas and the like, I have learned that in cycling: “I ride because I ride”.

During the holidays, one can get caught up in the presents, people, dramas, and the ever present exhaustion of well, the holidays. Over the years, I’ve been in all of the above situations and then some. This year, like in cycling, I am trying to “Holidays because I Holidays”.

Regardless of how, what, or why you get together this season, try to take a moment, sit back and enjoy them as much as you can.

If you also ride because you ride, and would like a sticker, either email me (bunchc at gmail) or ping me on twitter and we can arrange something.

05 Nov 2014

Session details here.

Speakers:

- Marek Denis - Research Fellow, CERN

- Steve Martinelli - Software Developer, IBM

- Joe Savak - Sr. Product Manager, Rackspace

- Brad Topol - Distinguished Engineer, IBM

In this presentation, we describe the federated identity enhancements we have added to support Keystone to Keystone federation for enabling hybrid cloud functionality. We begin with an overview of key hybrid cloud use cases that have been identified by our stakeholders including those being encountered by OpenStack superuser CERN. We then discuss our SAML based approach for enabling Keystones to trust each other and provide authorization and role support for resources in hybrid cloud environments.

Live Blog

Lots of different folks interested in identity federation: academia, companies, lots and lots of folks.

Use cases? - Easy to configure, cloud bursting, central policy point, federating out, federating in. Keep the client small. No new protocols.

“Federate In” - You already have an identity provider, SAML, etc. Folks already have SSO / Identity. Federation allows for use of existing credentials to work with OpenStack MSPs.

“Federate Out” - That is, you set up a trust between on prem and off prem clouds.

CERN’s Use-Case

CERN has 70,000 cores, and they need more to process ALL the data they produce. This requires federating out, which allows folks to use pay-as-you-go to hire additional resources as needed.

CERN also needs to be able to allow folks to federate in from others in the science community.

Now an interlude for Keystone classic Auth.

Federated identity in Icehouse - Integrate existing tools, SAML, etc. There is a diagram, it has lots of arrows; the gist is you send SAML to Keystone, Keystone gives you a token, and things are good. This worked, but not as well as it could. Mapping engine: groups in one system are not the same as groups in others. Woo Mapping: “IBM Regular Employees” -> “regular_canada” etc.
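
As a rough illustration of what a mapping step does, here is a tiny Python sketch that translates group names from the identity provider into local group names. This is not Keystone's mapping rule format, just the idea; `GROUP_MAP` and `map_groups` are hypothetical names, and only the one pair mentioned in the talk is included.

```python
# Remote (identity provider) group -> local (OpenStack) group.
# Only this pair comes from the talk; the structure itself is an assumption.
GROUP_MAP = {"IBM Regular Employees": "regular_canada"}


def map_groups(remote_groups, group_map=GROUP_MAP):
    """Translate remote group names to local ones, dropping unmapped groups."""
    return [group_map[group] for group in remote_groups if group in group_map]


if __name__ == "__main__":
    print(map_groups(["IBM Regular Employees", "Some Unmapped Group"]))
```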

New diagram for Federation in Juno. A lot more arrows. This time around, Keystone is the provider, and will provide some level of attestation to the other Keystone in the trust relationship. Once the trust is in place, the user passes the token to either.

The SAML generator takes the token and goes backwards. Token -> SAML Generator -> SAML Assertion

Now we’re at a slide covering all manner of config data. Important bits: Mapping is still a thing. You also need to ‘prime’ the SAML assertion pump.

keystone-manage now has a metadata generation thing.

Back to CERN: 2 datacenters, OpenStack Cells, Cells not popular. 40k users in AD, and 12k more in ADFS (Federation). CERN uses SAML2, and will be the first OpenStack in the world to allow federate-in, letting external entities consume their resources.

Patches to the OpenStack and Keystone clients.

Looking forward:

- Auth-N

- Horizon Integration

- More & Better Mapping

- Fine Grained ACLs

- More protocols